Automatic speech recognition (ASR) solutions are not new. We are already familiar with Amazon’s Alexa and Apple’s Siri. However, neither has triggered the anticipated revolution in how we interact with computers: a shift from typing to speaking.

Speaking of a revolution in ASR, Whisper from OpenAI has been gaining attention for its reported accuracy of 95% to 98.5% without manual intervention. It is a speech-to-text model that uses machine learning not only to recognize words but also to pick up the context and finer points of spoken language. This is a major step forward from traditional speech recognition systems.

Its accuracy, coupled with other features, makes OpenAI Whisper an important tool for applications that require accurate and efficient speech-to-text conversion: speech translation, voice activity detection, multilingual speech recognition, and more.

OpenAI Whisper as a Speech-to-Text Solution

OpenAI Whisper handles a variety of accents, background noise, and even technical jargon well. It supports more than 57 languages, including English, Afrikaans, Czech, and Galician, and can translate content from 99 languages into English. It was trained on 680,000 hours of labeled audio collected from the web, reportedly including TED Talks, clips, podcasts, and interviews.

On price, the OpenAI speech-to-text model Whisper is relatively cheap compared to other solutions. Through OpenAI’s API, it costs $0.006 to transcribe or translate one minute of audio. Given the price and accuracy, businesses are incorporating online Whisper transcription into a variety of use cases. Whisper is also exceptional at producing well-structured text with correct syntax and punctuation, so little human intervention is needed.
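To make the pricing concrete, here is a minimal sketch of a cost estimate. It assumes the $0.006 per-minute figure quoted above and simple pro-rata billing; the function name and the rounding choice are illustrative, so check current pricing before budgeting.

```python
from decimal import Decimal, ROUND_HALF_UP

# Per-minute price quoted in the article; an assumption, not a guaranteed rate.
PRICE_PER_MINUTE_USD = Decimal("0.006")


def estimate_cost_usd(audio_seconds: float) -> Decimal:
    """Estimate the cost of transcribing or translating audio of a given length."""
    if audio_seconds < 0:
        raise ValueError("audio_seconds must be non-negative")
    minutes = Decimal(str(audio_seconds)) / Decimal(60)
    cost = minutes * PRICE_PER_MINUTE_USD
    # Round to a tenth of a cent so short clips do not display as $0.00.
    return cost.quantize(Decimal("0.001"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    for label, seconds in [("1-minute clip", 60), ("1-hour meeting", 3600)]:
        print(f"{label}: ${estimate_cost_usd(seconds)}")
```

At this rate, an hour of audio costs about 36 cents, which is why per-minute pricing rarely dominates a transcription budget.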

Apart from this, Whisper is available as an open-source model, free for commercial use. Consequently, businesses or developers can integrate it into their own pipelines and modify it for their use cases without depending on OpenAI. Because the model is pre-trained, teams can build on it and improve its transcription and translation for a specific domain. For instance, a business offering medical transcription services can fine-tune Whisper to recognize medical terms. Lastly, with suitable hardware and audio processed in short chunks, Whisper can run in near real time, making it suitable for time-critical scenarios.
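Fine-tuning is a larger project than fits here. As a lighter, swapped-in alternative, the sketch below runs the open-source `openai-whisper` package locally and passes domain vocabulary through the `initial_prompt` option of `transcribe`. The helper names, the glossary format, and the `base` default are assumptions for illustration; a prompt only nudges the model and is not equivalent to fine-tuning.

```python
from typing import Iterable


def build_initial_prompt(terms: Iterable[str]) -> str:
    """Join domain terms into a short glossary-style prompt (hypothetical helper)."""
    cleaned = [term.strip() for term in terms if term.strip()]
    if not cleaned:
        return ""
    return "Glossary: " + ", ".join(cleaned)


def transcribe_with_terms(audio_path: str, terms: Iterable[str],
                          model_name: str = "base") -> str:
    """Transcribe a local file while hinting at domain vocabulary."""
    # Imported lazily: needs `pip install openai-whisper` and ffmpeg on the PATH.
    import whisper

    model = whisper.load_model(model_name)
    prompt = build_initial_prompt(terms) or None  # None means "no prompt"
    result = model.transcribe(audio_path, initial_prompt=prompt)
    return result["text"].strip()
```

A call such as `transcribe_with_terms("dictation.mp3", ["metoprolol", "tachycardia"])` (the file name is hypothetical) would return the transcript as a plain string.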

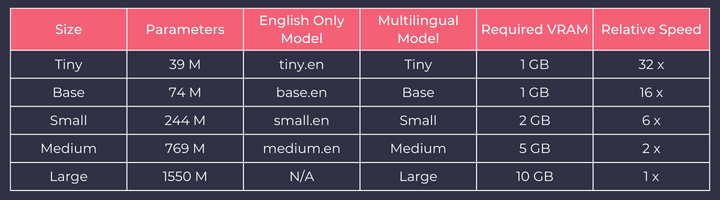

Whisper comes in five model sizes: tiny, base, small, medium, and large. The ‘large’ model offers the highest accuracy, but that does not mean you need it. Smaller models are faster and need less memory, so businesses should choose according to their requirements.
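One way to frame that choice is by available GPU memory. The sketch below picks the largest model that fits a memory budget. The VRAM figures are approximate values taken from the project's README and are treated here as assumptions; the helper itself is hypothetical, and real needs vary with version and settings.

```python
# Approximate VRAM needs in GB, ordered smallest to largest.
# Assumed figures; verify them for the Whisper version you deploy.
MODEL_VRAM_GB = [
    ("tiny", 1),
    ("base", 1),
    ("small", 2),
    ("medium", 5),
    ("large", 10),
]


def pick_model(available_vram_gb: float) -> str:
    """Return the largest model whose approximate VRAM need fits the budget."""
    fitting = [name for name, need in MODEL_VRAM_GB if need <= available_vram_gb]
    if not fitting:
        raise ValueError("No Whisper model fits in the available memory")
    return fitting[-1]


if __name__ == "__main__":
    for budget in (1, 4, 12):
        print(f"{budget} GB -> {pick_model(budget)}")
```

Memory is only one criterion; latency targets and the languages involved may justify a smaller or larger model than this rule suggests.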

If you don’t want to install, configure, or maintain it yourself, you can opt for a managed or hosted Whisper service offered by companies that run their own Whisper clusters in the cloud or on on-premise infrastructure.

In terms of hardware, Whisper runs on both GPUs (graphics processing units) and CPUs (central processing units). However, it is much faster on a GPU, with lower inference times, so audio is processed quickly and results come back sooner. Accuracy depends on the model size rather than the hardware. On CPUs, or when testing before moving to a larger model, Whisper’s smaller models are the practical choice.
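A minimal sketch of this hardware choice with the open-source package follows. It uses `torch.cuda.is_available()` to detect a GPU and falls back to a smaller model with 32-bit precision on CPU. The default model sizes are illustrative assumptions, not recommendations from OpenAI.

```python
def runtime_settings(cuda_available: bool) -> dict:
    """Pick device, precision, and a default model size (illustrative defaults)."""
    if cuda_available:
        return {"device": "cuda", "fp16": True, "model_name": "medium"}
    # Half precision is not used on CPU, so fall back to FP32 and a small model.
    return {"device": "cpu", "fp16": False, "model_name": "base"}


def transcribe(audio_path: str) -> str:
    """Transcribe a file on the GPU when one is present, otherwise on the CPU."""
    # Imported lazily: needs `pip install openai-whisper` (which installs torch).
    import torch
    import whisper

    settings = runtime_settings(torch.cuda.is_available())
    model = whisper.load_model(settings["model_name"], device=settings["device"])
    result = model.transcribe(audio_path, fp16=settings["fp16"])
    return result["text"].strip()
```

Keeping the decision in a small pure function makes it easy to override in tests or to replace with a configuration file later.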

The Top Use Cases of OpenAI Whisper as an ASR System

Whisper comes with a lot of benefits. It can transcribe speech, translate speech from other languages into English, and identify the language being spoken, and its transcripts can drive tasks based on spoken commands. So, it should come as no surprise that it fits a variety of use cases in different domains.
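These capabilities map onto a single call in the open-source package. In the sketch below, the `task` option switches between transcription and translation into English, and the returned dictionary carries the detected language code. The function names and the `small` default are assumptions for illustration.

```python
from typing import Tuple


def decode_options(translate_to_english: bool) -> dict:
    """Build the task option: 'translate' outputs English, 'transcribe' keeps the source language."""
    return {"task": "translate" if translate_to_english else "transcribe"}


def transcribe_and_identify(audio_path: str, translate_to_english: bool = False,
                            model_name: str = "small") -> Tuple[str, str]:
    """Return (detected language code, text) for an audio file."""
    # Imported lazily: needs `pip install openai-whisper` and ffmpeg on the PATH.
    import whisper

    model = whisper.load_model(model_name)
    result = model.transcribe(audio_path, **decode_options(translate_to_english))
    return result["language"], result["text"].strip()
```

For a Spanish recording, for example, the first element would be the code `es`, and the text would be Spanish or English depending on the flag.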

Voice Search

Voice search is a growing trend. By some estimates, about 50% of people, and more than half of smartphone users, prefer using voice technology to search for information. It's clear the business landscape is changing. To remain competitive, companies need to ensure their content is visible in voice search results as well. Here, Whisper can be used to transcribe voice queries so they can be understood and answered.

Customer Support

Businesses can develop their own voice assistants to help customers with a range of queries or services. Businesses can also use Whisper to transcribe and analyze customer calls, find out what customers ask about most, and ultimately improve their product offerings. From a customer perspective, it’s all about convenience: a voice assistant can be used to find information, make reservations, buy products, etc.
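As a rough sketch of the call-analysis step, the snippet below counts how many transcripts mention each topic. The topic names and keyword lists are made up for illustration, and simple substring matching stands in for whatever analysis a real pipeline would use.

```python
from collections import Counter
from typing import Iterable

# Hypothetical topics and keywords; replace with terms from your own domain.
TOPIC_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "delivery": ("delivery", "package", "shipping"),
    "account": ("password", "login", "account"),
}


def count_topics(transcripts: Iterable[str]) -> Counter:
    """Count the number of transcripts that mention each topic at least once."""
    counts = Counter()
    for transcript in transcripts:
        lowered = transcript.lower()
        for topic, keywords in TOPIC_KEYWORDS.items():
            if any(keyword in lowered for keyword in keywords):
                counts[topic] += 1
    return counts


if __name__ == "__main__":
    calls = [
        "I was charged twice and need a refund.",
        "Where is my package? The delivery is late.",
        "Please resend my invoice.",
    ]
    print(count_topics(calls).most_common())
```

Substring matching is deliberately crude (it would also match "recharge", for instance), so treat the counts as a first pass rather than a finished analysis.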

Transcription

The Whisper voice recognition model’s key purpose is to transcribe audio. It can be used for meetings, interviews, or conference calls. In healthcare, for example, it can be used to transcribe patient records and medical dictations. In legal work, it can help build cases, review important material, and draft legal papers. Transcription is a broad use case that applies in many other settings as well. Simply put, Whisper helps make sure no valuable information is lost, and that’s something any business would appreciate.
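For meeting or interview notes, timestamps are often as useful as the text. The open-source package returns a list of segments, each with `start`, `end` (in seconds), and `text`. The sketch below formats such segments into readable lines; the output format and function names are assumptions, and the sample data is made up.

```python
from typing import Iterable, Mapping


def format_timestamp(seconds: float) -> str:
    """Convert seconds to HH:MM:SS, dropping fractions of a second."""
    total = int(seconds)
    hours, remainder = divmod(total, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def format_segments(segments: Iterable[Mapping]) -> str:
    """Render Whisper-style segments as '[start - end] text' lines."""
    lines = []
    for segment in segments:
        text = segment["text"].strip()
        if text:  # skip segments that contain no words
            start = format_timestamp(segment["start"])
            end = format_timestamp(segment["end"])
            lines.append(f"[{start} - {end}] {text}")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = [
        {"start": 0.0, "end": 4.2, "text": " Hello everyone."},
        {"start": 4.2, "end": 65.0, "text": " Let's begin."},
    ]
    print(format_segments(sample))
```

In practice, the `segments` argument would be the `"segments"` entry of the dictionary returned by `model.transcribe(...)`.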

Break Down Communication Barriers

Offering accessibility and building an inclusive customer base are crucial for business success. Automated transcription benefits customers with hearing impairments, who can review spoken content in text form. This will not only enhance the brand's reputation but also expand the potential customer base.

Language Learning

One of the most interesting and disruptive use cases of Whisper is creating interactive and immersive learning experiences for learners at different levels. Whether it's practicing real-world conversations or accent training, Whisper can help with everyday language use. Since it is a flexible tool, different learning paths can be created for different users (beginner, intermediate, or advanced).

Voice Verification

Whisper itself transcribes speech; it does not identify who is speaking. In a voice biometrics pipeline, however, it can work alongside a dedicated speaker-verification model that analyzes a person’s voice, accent, tone, and speech patterns. This plays a critical role in authentication in sectors such as banking, healthcare, and law enforcement, or wherever secure transactions are essential. Such a system creates a voice profile, or voiceprint (like a fingerprint), for each user, while Whisper can check what was said, such as a spoken passphrase. So, when a user tries to access a system, the authentication system can accept or deny the request based on the match.

To Cap It Off

Recently, to resolve an edge case, OpenAI released an update for audio files in which no speech is detected. This can happen due to faulty microphones, corrupted files, or user error. In such cases, Whisper now returns an empty transcription.
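Applications still need to handle that empty result themselves. A minimal sketch, assuming the result is a dictionary with a `text` field as in the open-source package; the helper names and the user-facing message are illustrative.

```python
from typing import Optional


def extract_speech(result: dict) -> Optional[str]:
    """Return the transcript, or None when the transcription is empty."""
    text = (result.get("text") or "").strip()
    return text if text else None


def handle_result(result: dict) -> str:
    """Turn a transcription result into something safe to show a user."""
    text = extract_speech(result)
    if text is None:
        # Likely causes named in the article: faulty microphone, corrupted file, user error.
        return "No speech detected. Check the microphone or the audio file and try again."
    return text


if __name__ == "__main__":
    print(handle_result({"text": ""}))
    print(handle_result({"text": " Thanks for calling. "}))
```

Returning `None` rather than an empty string makes the "no speech" case explicit for callers that store or forward transcripts.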

OpenAI is consistently upgrading Whisper to ensure reliability and robustness. It continues to be one of the leading ASR or speech-to-text solutions. With it, businesses as well as individuals can focus more on their productivity rather than typing.

Transcribe audio with high accuracy (up to 95%) in real time with Floatbot NEO, our ASR-as-a-Service. It brings deep-tech speech-to-text, or ASR (automatic speech recognition), functionality to your voice-based applications. It is supported both in the cloud and on-premise. We follow a pay-per-API pricing model for your convenience. You can also try the Whisper AI demo for free in real time on our no-code platform.